<!———

Work info

———>

Role:

Lead Product Designer

Timeline:

Jun 2023 - Apr 2024

Scope:

UI Design, UX Research and GTM collaboration

<!———

Context

———>

Evaluating the API required a developer. Most buyers weren't developers.

Teams wanted to evaluate Arabic and Indian-language speech APIs before committing to integration. The only way to do it was to write code. I designed a no-code web platform that removed that barrier and took it from zero to launch.

Outcomes

500+

active users within 24 hours of MVP launch

+8

enterprise customers onboarded

<3 min

taken for a buyer to evaluate the API

90% of language AI is built for European languages. NeuralSpace was built for everyone else.

NeuralSpace had strong speech APIs for Arabic and Indian languages — markets most AI companies ignored. The problem was access. Testing the API required writing code. Sales demos were slow, engineering-dependent, and hard to repeat. Non-technical buyers had no way to evaluate quality on their own.

VoiceAI was the product that closed that gap. As the lead product designer, I took it from early definition to MVP launch and into post-launch iteration — working daily with engineering and aligned with sales and marketing from day one.

<!———

Process

———>

I ran alignment sessions to decide what to cut, not just what to build.

Early sessions with the CEO, CTO, product lead, and engineering lead surfaced two competing instincts: ship broadly to show range, or ship narrowly to prove core value. I pushed for narrow. If we couldn't demonstrate accurate transcription clearly, adding more features would dilute the signal.

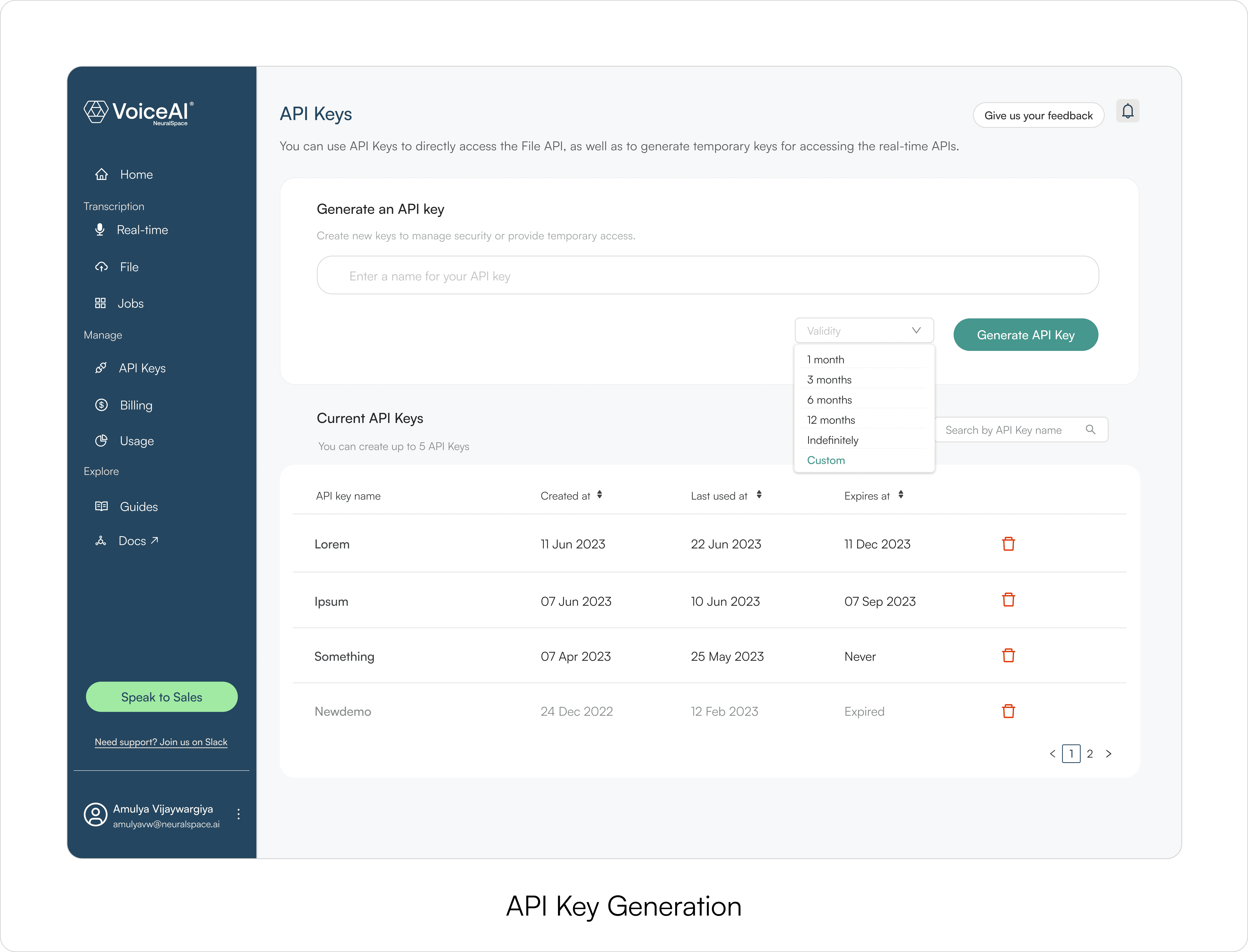

I brought sales and marketing in at this stage too. Their input shaped one important structural decision: API integration sat below the output, not above it. See the result first, then integrate.

<!———

MVP

———>

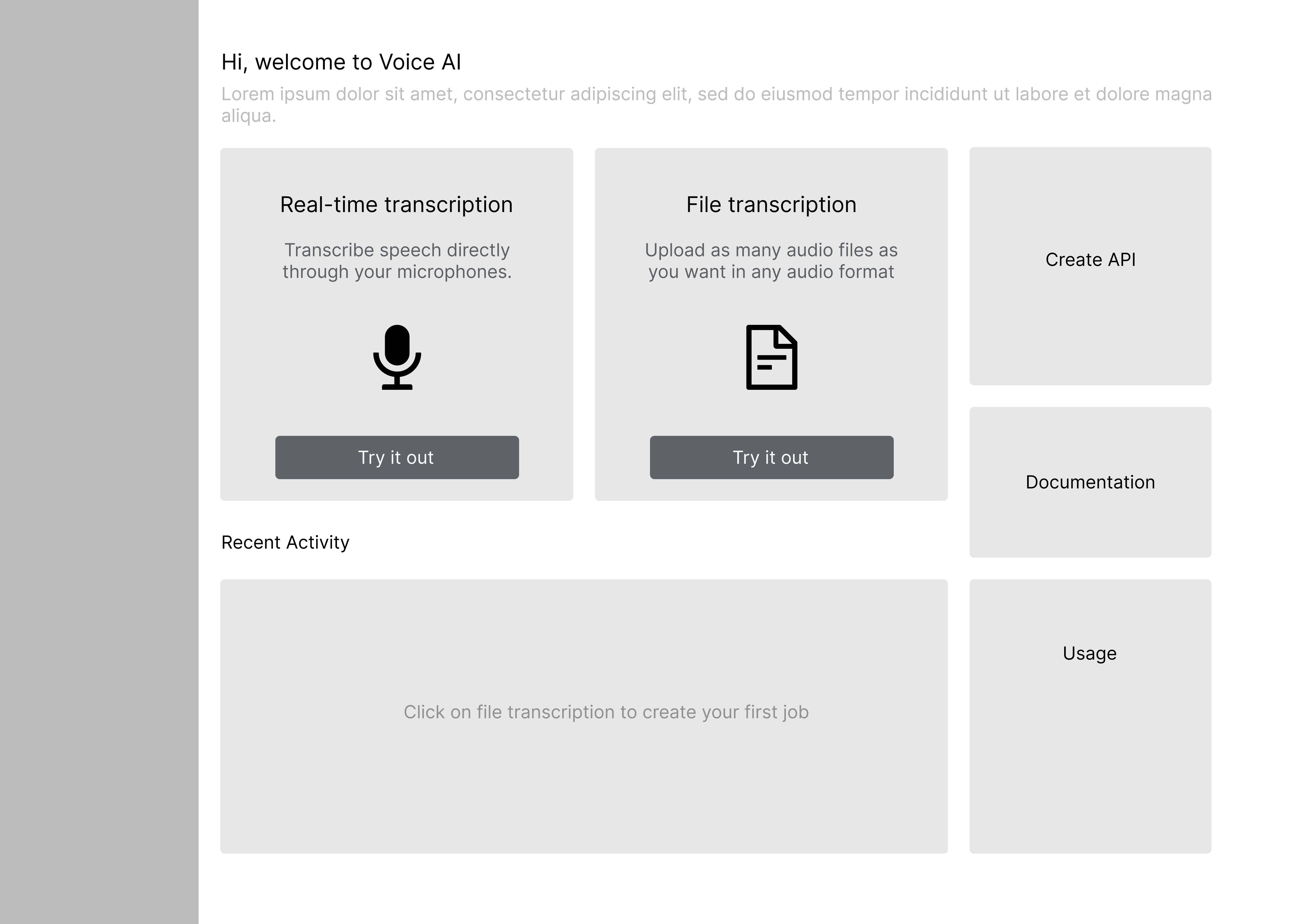

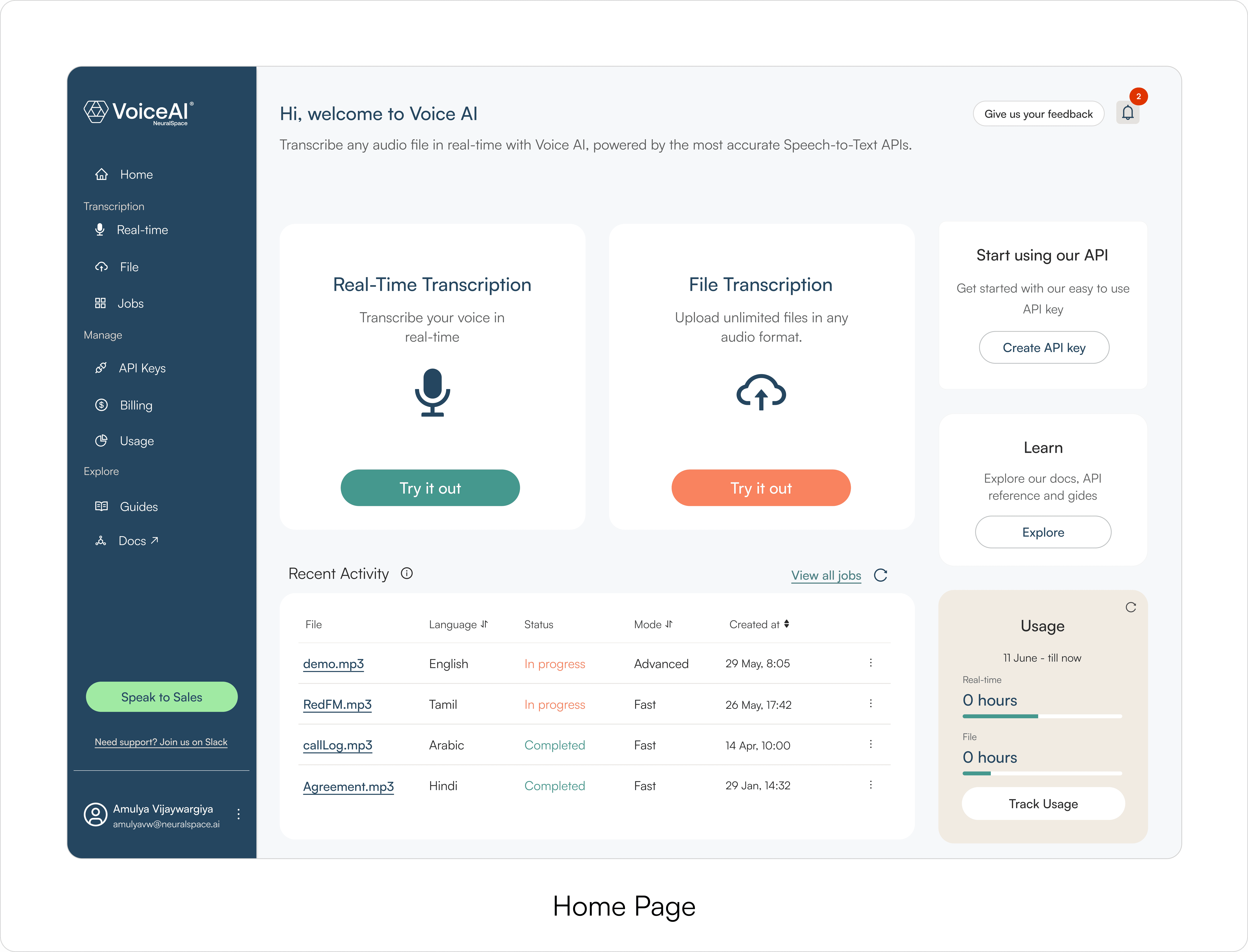

Lo-fi: most iteration happened on two screens

The homepage and the output screen got the most iteration — and for different reasons.

The homepage determined whether a buyer would start or leave. I tested three structures before landing on the final approach: two primary workflow entry points as the dominant visual, configuration options accessible but secondary.

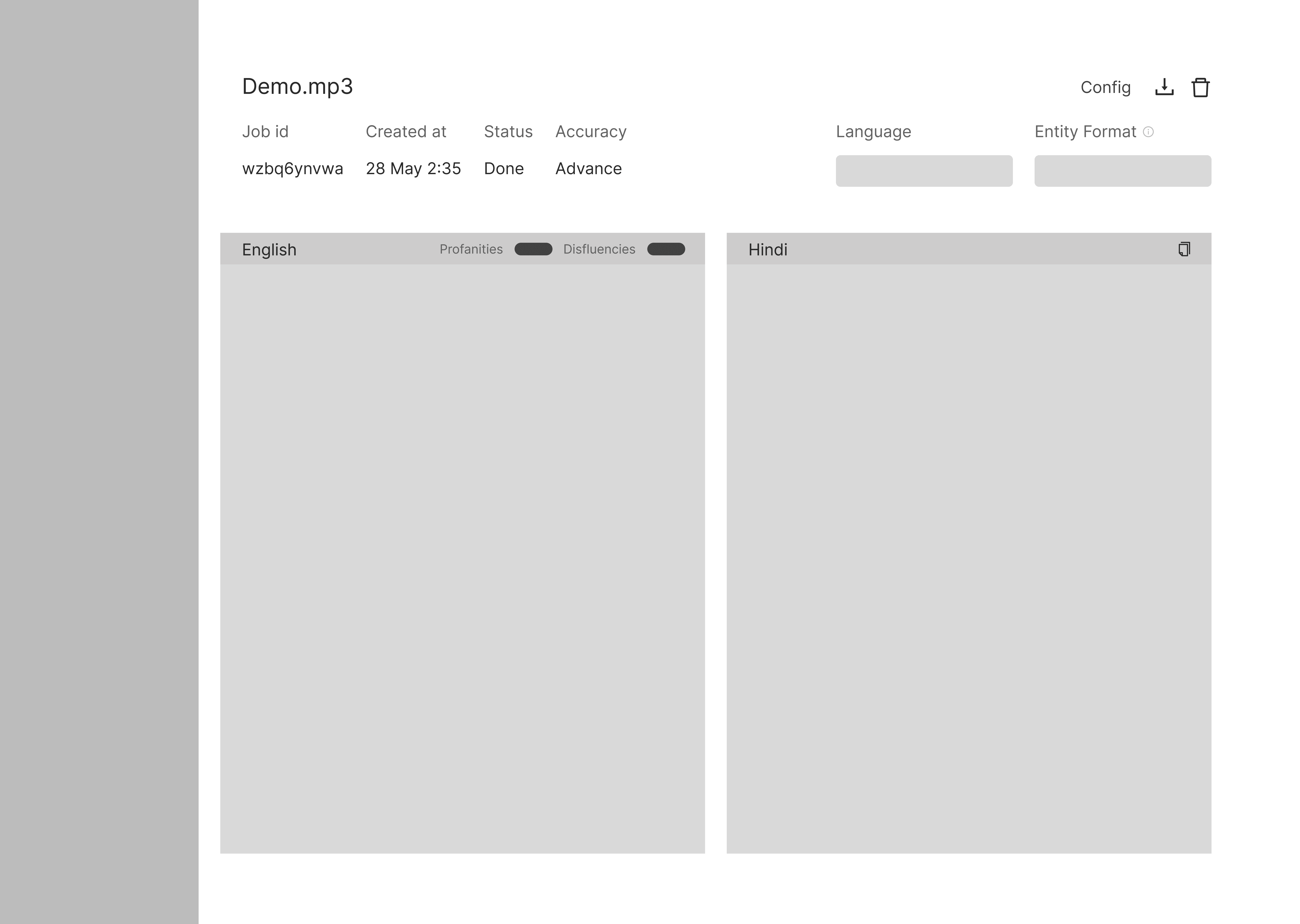

The output screen determined whether the product felt trustworthy. This is where a buyer decided if the transcription was good enough to integrate. I tested how results were surfaced, where speaker labels sat, and how much information was visible without scrolling.

Everything else in lo-fi was relatively straightforward once these two screens were resolved.

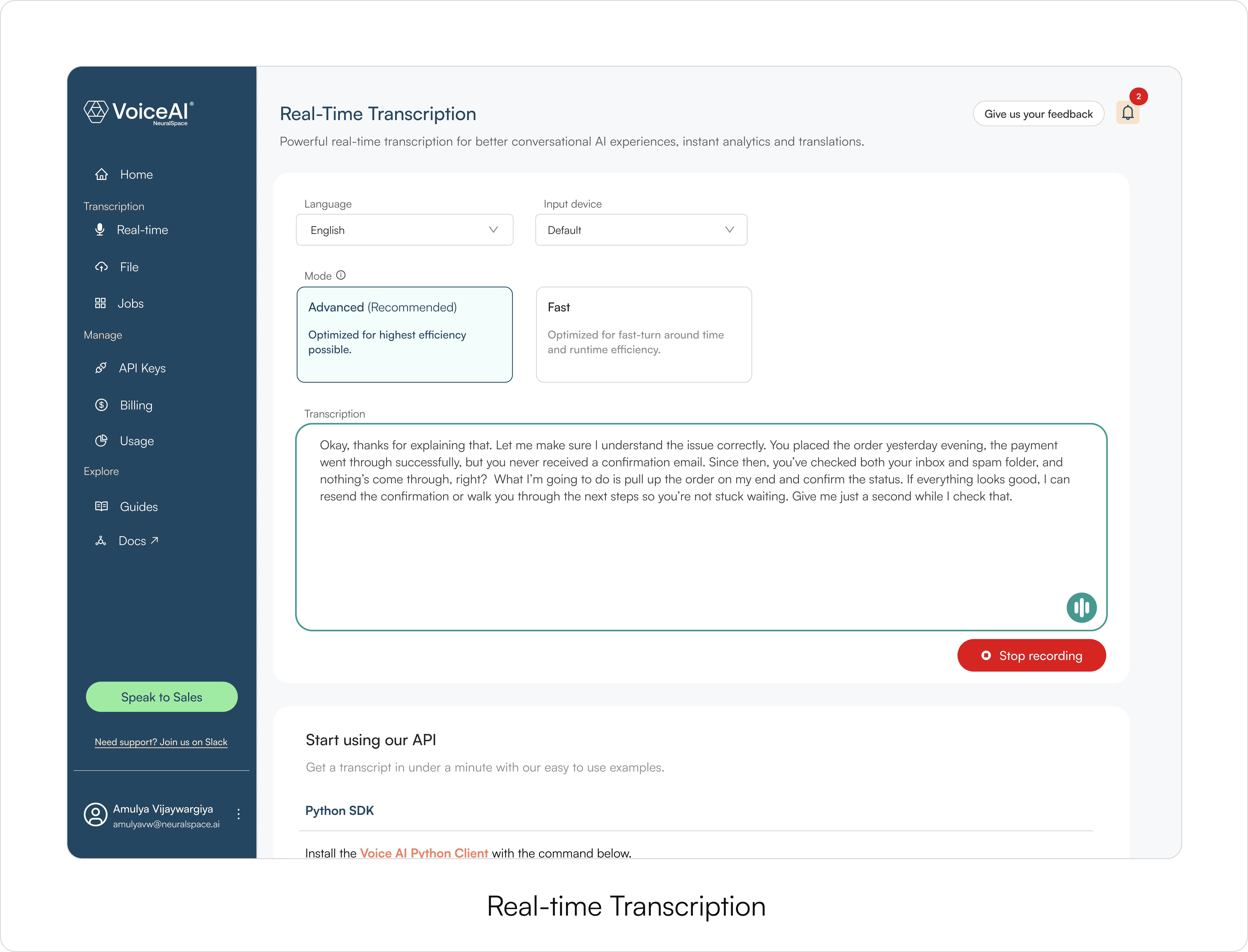

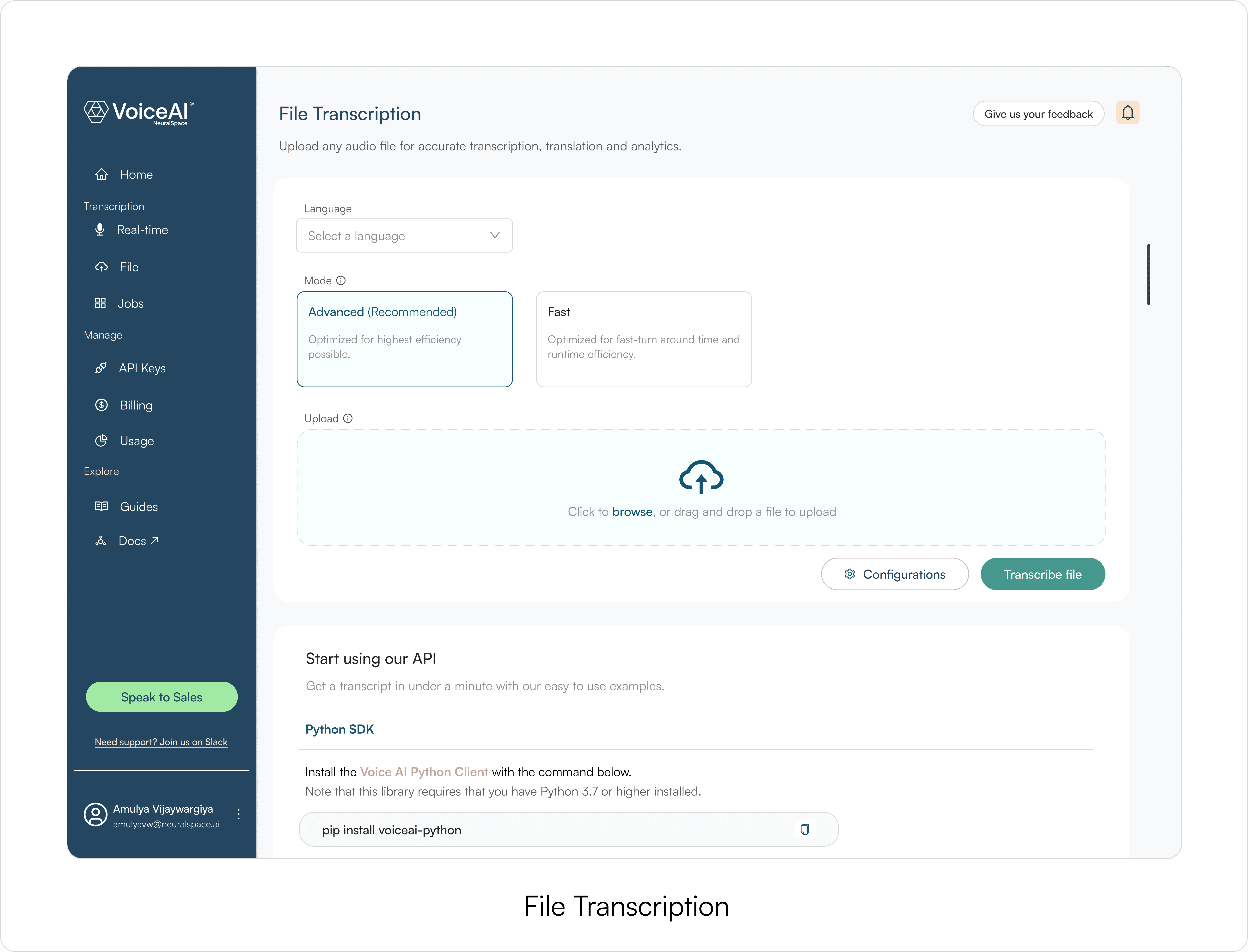

Hi-fi: aligning with the rebrand and shipping the MVP

After lo-fi, I moved into high-fidelity using NeuralSpace's rebrand and design system. The focus was a minimal UI and output screens that were easy to scan, especially during live demos where a buyer is deciding in real time.

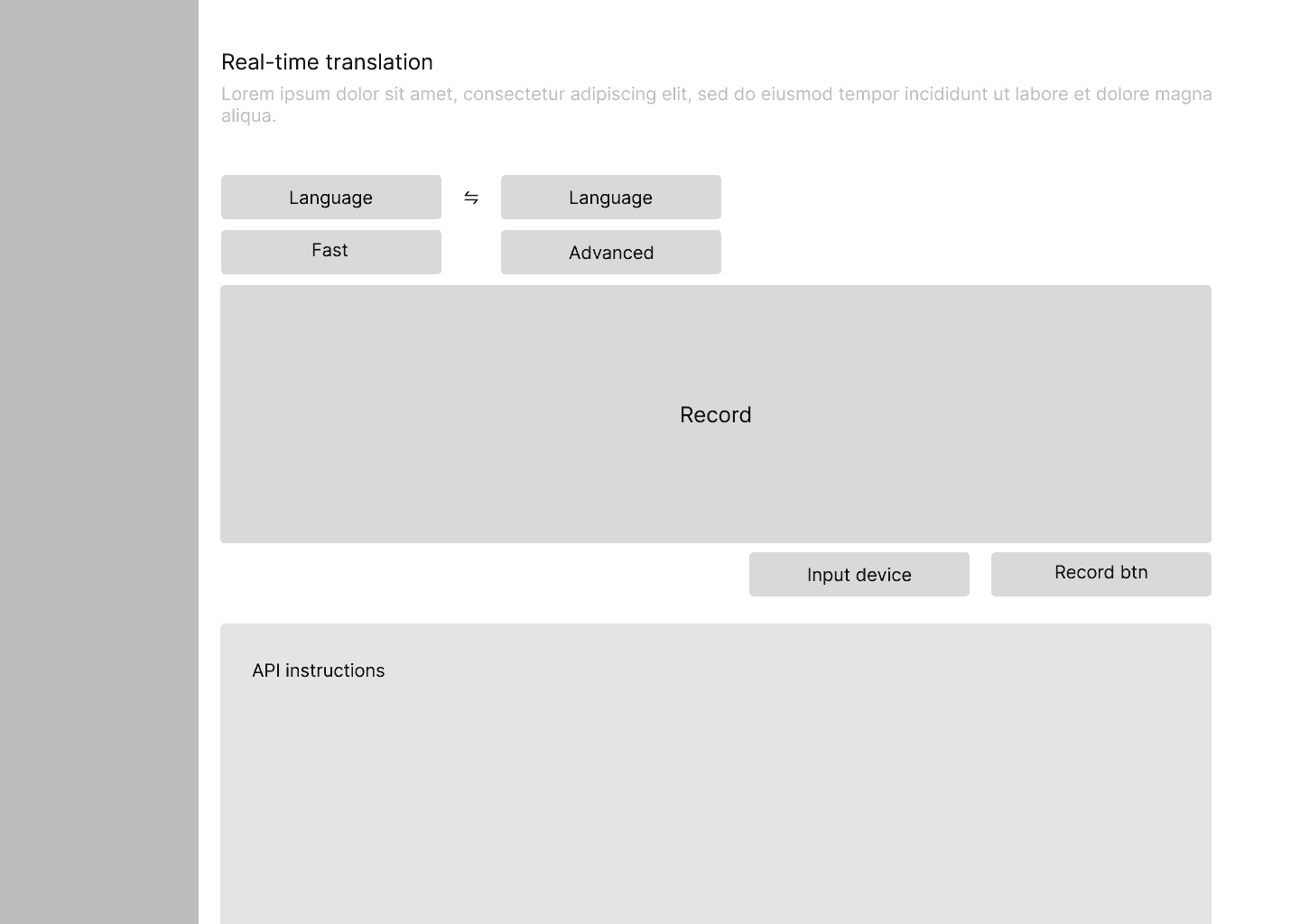

Four workflows in the MVP

Real-time transcription — the primary evaluation use case

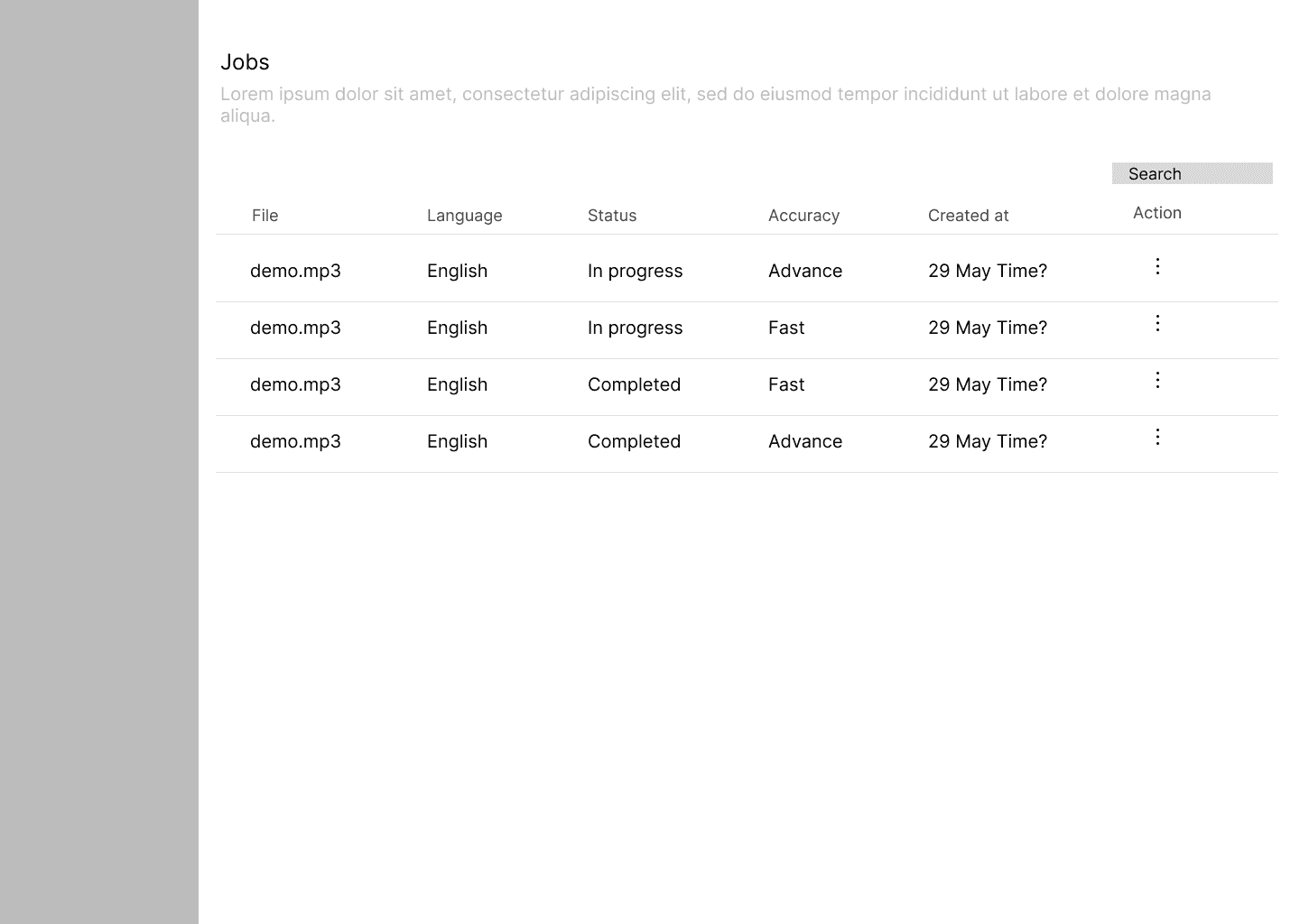

File-based transcription — async, for longer content

Sentiment analysis and transcript summaries

Translation with side-by-side language comparison

<!———

Testing

———>

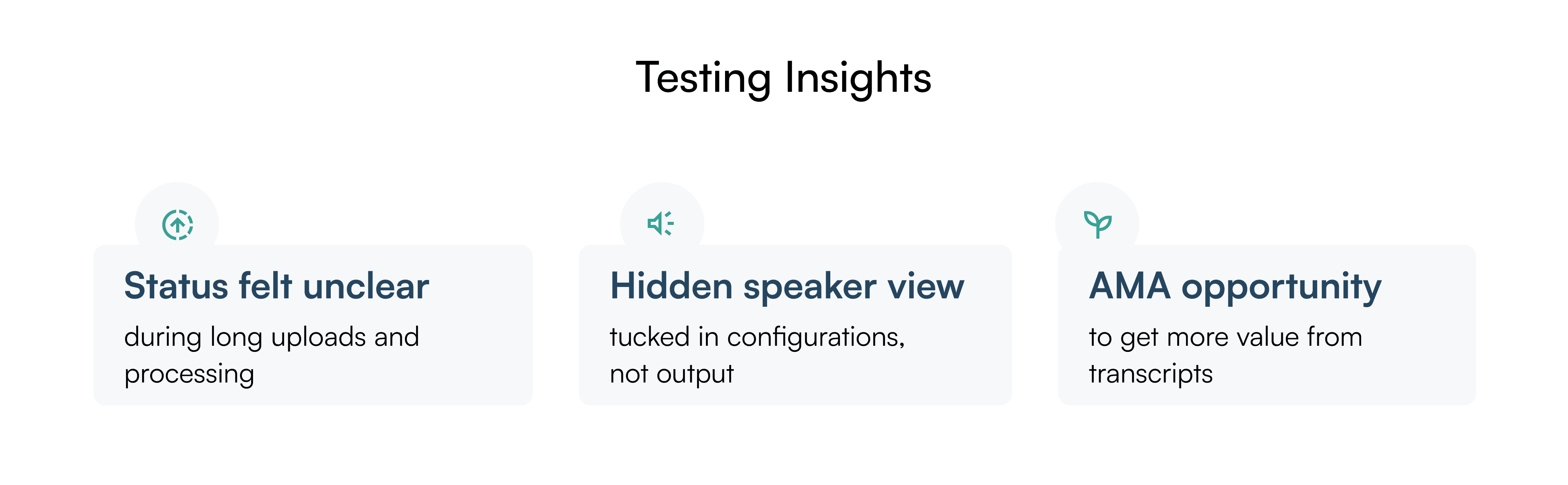

We assumed speaker separation was a power-user feature. Testing proved it was everyone's first move.

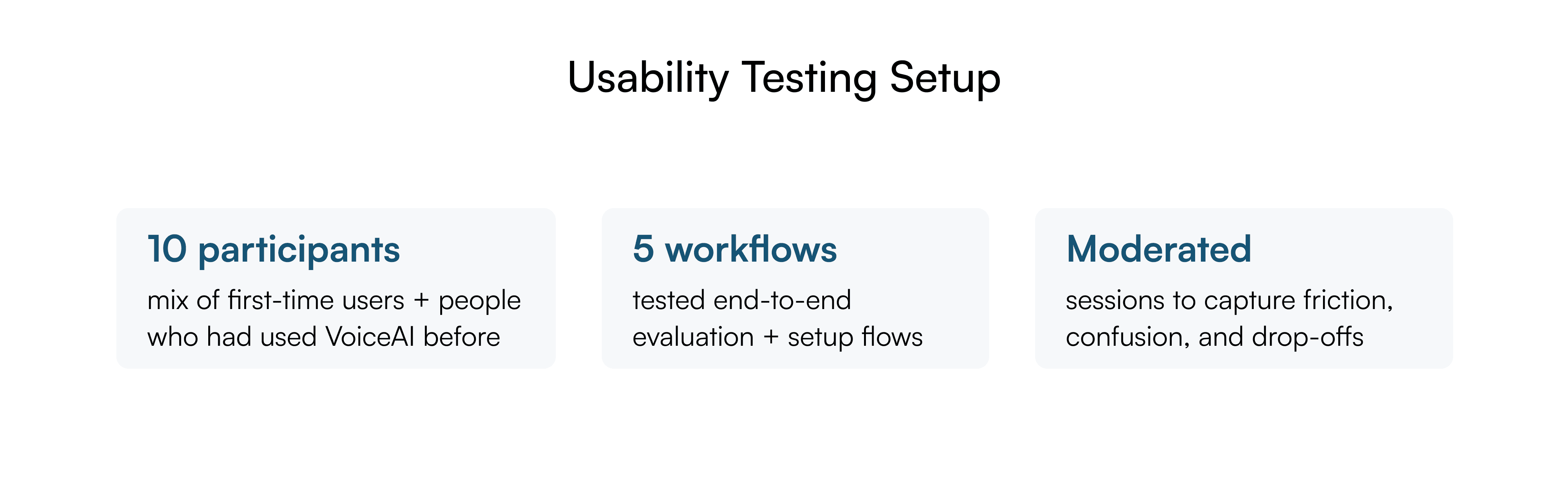

After launch, I ran task-based usability testing and tracked two feedback channels: in-app bug reports and the NeuralSpace Slack community.

<!———

Post MVP

———>

We fixed the moments that made users doubt.

A faster way to get value from transcripts

Users in the Slack community were copying transcript text into ChatGPT to ask questions about their recordings.

I built a native Q&A layer — AMA — directly on top of the transcript output to remove that step.

No more guessing during uploads

Drop-off data showed users abandoning during long uploads around the 15-second mark.

There was no system feedback, so the product just looked stuck. I added explicit in-progress states so users always knew the system was working.

Speaker separation moved to where users actually looked

This was a direct result of the testing finding. I moved speaker separation from the config panel to a default dropdown on the output screen. Time to access went from 4 clicks to 1, and users stopped reporting it as a missing feature.

Beyond transcription — adding Text-to-Speech

Enterprise sales flagged repeated buyer requests for TTS in Arabic and Hindi dialects — 6 inbound asks in a single week. I designed TTS as a standalone workflow once the signal was clear, supporting NeuralSpace's focus on dialect and pronunciation accuracy for underserved languages.

Together, these changes shifted VoiceAI from a transcription tool into a flexible environment for exploring speech-based workflows.

<!———

Reflection

———>

Beyond the interface — designing the go-to-market

I also worked with marketing to create demo scripts, landing page copy hierarchy, and launch assets. The demo script structured the product walkthrough around one use case: an Arabic call center evaluating transcription accuracy before signing a contract. That framing reduced what sales had to explain in a first call.

What did I learn?

Early GTM involvement makes launch easier

Having sales and marketing aligned from the start meant the product and the demo story were never out of sync. No last-minute scrambling before launch.

Narrow focus, test often

Shipping a focused MVP and testing at every step gave us a clearer picture of what to build next. Trying to do everything at once would have made it harder to see what was actually working.